The 10GBit network experiment part 3

I declared my attempts with the Asus XG-C100C card a failure, but 10GBit network cards are not exactly cheap, and there is not much choice.

10GTek 10GBit card

After a lot of research I decided to buy the 10GTek 10GBit card with the Intel X520 chip, which is priced similarly to the Asus card. However, these cards use a so-called SFP+ port, and they cannot switch to 5 or 2.5 GBit.


But since the server sits right next to me and I am the only user, this was not an obstacle.

So I got a DAC cable and connected the two cards directly. Of course, the 10GBit link again got its own separate network.
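
With its own subnet on the DAC link, the PC reaches the NAS over the 10GBit cable whenever it uses the NAS's link address, while everything else stays on the normal LAN. Here is a minimal Python sketch that sanity-checks such an addressing plan with the standard `ipaddress` module; the LAN and link subnets and both host addresses are made-up examples.

```python
import ipaddress

# Made-up example addressing: the normal home LAN and a tiny /30 subnet
# reserved for the direct DAC link between PC and NAS.
LAN = ipaddress.ip_network("192.168.1.0/24")
LINK = ipaddress.ip_network("10.10.10.0/30")


def check_link_plan(lan, link, pc_ip, nas_ip):
    """Return a list of problems with the proposed point-to-point addressing."""
    problems = []
    if lan.overlaps(link):
        problems.append("link subnet overlaps the LAN")
    for name, ip in (("pc", pc_ip), ("nas", nas_ip)):
        addr = ipaddress.ip_address(ip)
        if addr not in link:
            problems.append(f"{name} address {ip} is outside {link}")
        elif addr in (link.network_address, link.broadcast_address):
            problems.append(f"{name} address {ip} is the network or broadcast address")
    if pc_ip == nas_ip:
        problems.append("pc and nas use the same address")
    return problems


print(check_link_plan(LAN, LINK, "10.10.10.1", "10.10.10.2"))  # []
```

A /30 leaves exactly two usable host addresses, which is all a direct cable between two machines needs.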

For Windows I had to download and install the drivers from the Intel website, while Openmediavault worked out of the box.

The first result

The cards have a longer PCIe connector and therefore need an x16 expansion slot. I could live with that on both machines: the NAS does not need a graphics card, and my Windows PC with the Ryzen processor still has such a slot free.

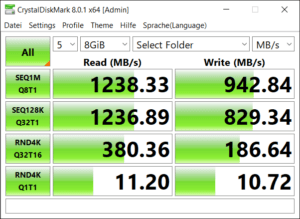

Nevertheless, the first results are very impressive. The transfer rates are definitely what you would expect from a 10GBit network.

Of course, with a test file of only 8 GB, we mainly measure how fast the file lands in RAM, but this is only the first step. iperf, which measures pure network throughput without touching the disks, confirmed these values as well.
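
Assuming iperf3 (started with `iperf3 -s` on the NAS and `iperf3 -c <NAS link address> -J` on the PC), the `-J` flag produces a JSON report, and a small Python sketch can pull out the receiver-side rate. The sample JSON below is a trimmed, made-up illustration of the report structure, not one of my measurements.

```python
import json


def received_gbit_per_s(iperf3_json: str) -> float:
    """Extract the receiver-side TCP throughput (Gbit/s) from `iperf3 -J` output."""
    report = json.loads(iperf3_json)
    # For TCP tests, iperf3 summarizes both directions under "end".
    bps = report["end"]["sum_received"]["bits_per_second"]
    return bps / 1e9


# Trimmed, made-up example of the JSON structure (not a real measurement).
sample = (
    '{"end": {"sum_sent": {"bits_per_second": 9.1e9},'
    ' "sum_received": {"bits_per_second": 9.0e9}}}'
)
print(f"{received_gbit_per_s(sample):.2f} Gbit/s")  # 9.00 Gbit/s
```

The receiver-side figure is the more honest one, since it counts only what actually arrived.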

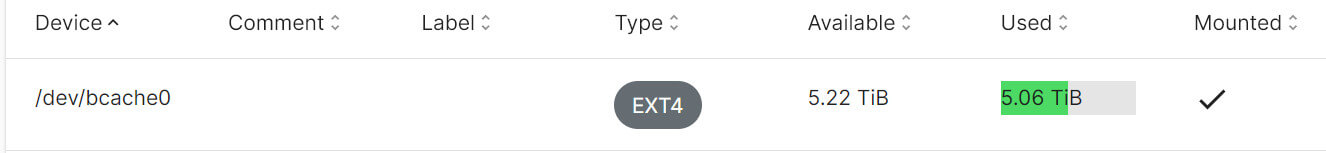

The BCache Drama

Of course, 10GBit speeds cannot be sustained when files are very large and have to be written to the hard disks along the way. This inevitably causes slowdowns, because mechanical hard disks come nowhere near such speeds.
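
When the slowdown starts can be estimated with a simple model: if data arrives at R_net and the disks absorb only R_disk, a write buffer of size B fills after t = B / (R_net - R_disk), and from then on the transfer drops to roughly disk speed. Here is a Python sketch of that estimate. All three numbers in the example are hypothetical, and on Linux the usable buffer is only part of the RAM, because the kernel caps the dirty page cache (see `vm.dirty_ratio`).

```python
import math


def seconds_until_buffer_full(buffer_mb: float, net_mb_s: float, disk_mb_s: float) -> float:
    """Time until a write buffer fills when data arrives faster than the disks absorb it.

    Simple fluid model: the buffer grows at (net - disk) MB/s.
    Returns math.inf if the disks keep up with the network.
    """
    surplus = net_mb_s - disk_mb_s
    if surplus <= 0:
        return math.inf
    return buffer_mb / surplus


# Hypothetical numbers: ~1100 MB/s from the network, 250 MB/s onto the disks,
# 4000 MB of usable write buffer.
t = seconds_until_buffer_full(4000, 1100, 250)
print(f"buffer full after {t:.1f} s, about {t * 1100 / 1000:.1f} GB received")
# buffer full after 4.7 s, about 5.2 GB received
```

This is exactly the gap an SSD cache is meant to close: it makes B much larger than the RAM alone could.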

bcache should help here. Combined with a suitably fast NVMe SSD, it should cache the files so that high transfer rates are maintained.
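
According to the kernel's bcache documentation, writes are only accelerated in `writeback` mode. This mode is not the default (`writethrough` is), because data still sitting on the SSD is lost if the SSD fails. The current mode is shown in `/sys/block/bcache0/bcache/cache_mode`, with the active one in brackets. Also worth knowing: bcache skips sequential I/O above `sequential_cutoff` (4 MB by default), so large file copies may bypass the SSD unless that value is lowered, for example to 0. Below is a minimal Python sketch for reading and setting the mode. The device name `bcache0` is an assumption, and the helper functions are hypothetical, not part of bcache-tools.

```python
from pathlib import Path

# Assumption: the cached device registered as bcache0; adjust for your system.
BCACHE_SYSFS = Path("/sys/block/bcache0/bcache")


def parse_cache_mode(text: str) -> str:
    """Return the active mode from a line like 'writethrough [writeback] writearound none'."""
    for token in text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    raise ValueError(f"no active mode marked in {text!r}")


def current_cache_mode(sysfs: Path = BCACHE_SYSFS) -> str:
    """Read the active cache mode from sysfs (only works where bcache0 exists)."""
    return parse_cache_mode((sysfs / "cache_mode").read_text())


def set_cache_mode(mode: str, sysfs: Path = BCACHE_SYSFS) -> None:
    """Switch the mode, e.g. to 'writeback'; needs root.

    In writeback mode, data not yet flushed to the disks is lost if the SSD fails.
    """
    (sysfs / "cache_mode").write_text(mode)


if __name__ == "__main__":
    # Demo on a sample string so it runs on machines without bcache.
    print(parse_cache_mode("writethrough [writeback] writearound none"))  # writeback
```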

Apart from the official documentation, you can hardly find any articles about bcache. I did find one, though, which even suggested that I might be able to keep using my existing data disks. But that did not work.

I also did not manage to initialize the 3 disks with make-bcache, even though the documentation says you can pass several devices on the command line. After several tries I gave up on keeping my SnapRAID disk array. Instead, I created a regular RAID 5 and initialized the md0 device with bcache.
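
The two steps can be sketched as a small Python script that only prints the commands (dry run) unless told otherwise. `mdadm --create` builds the RAID 5, then `make-bcache -B` formats it as the backing device, with `-C` naming the cache SSD. The device names are hypothetical (check yours with `lsblk`), `--writeback` is optional, and running the commands for real wipes the disks.

```python
import shlex
import subprocess

# Hypothetical device names: three data disks and one NVMe SSD.
DATA_DISKS = ["/dev/sdb", "/dev/sdc", "/dev/sdd"]
CACHE_SSD = "/dev/nvme0n1"


def build_commands(disks, ssd, md="/dev/md0", writeback=True):
    """Return two command lines: RAID 5 over the disks, then bcache on top of md."""
    raid = ["mdadm", "--create", md, "--level=5", f"--raid-devices={len(disks)}", *disks]
    bcache = ["make-bcache"]
    if writeback:
        bcache.append("--writeback")
    # -B marks the following device as backing device, -C as cache device.
    bcache += ["-B", md, "-C", ssd]
    return [raid, bcache]


def run(commands, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # destroys existing data on the devices!


if __name__ == "__main__":
    run(build_commands(DATA_DISKS, CACHE_SSD))
```

Once registered, the cached device appears as `/dev/bcache0`, and the file system goes on that device rather than directly on `md0`.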


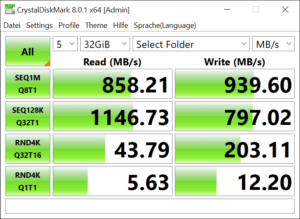

That finally worked, and even with test files of around 32 GB, far more than the installed RAM, I get very good values. Even when I archive a larger video project on the server, I still get a transfer rate of 500-600 MB/s.
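
For context, here is a quick calculation of how 500-600 MB/s compares with the raw 10GBit line rate. It treats MB as decimal megabytes and ignores protocol overhead, so the real ceiling is somewhat lower. The 100 GB project size is a hypothetical example.

```python
def line_rate_mb_s(gbit_per_s: float) -> float:
    """Raw line rate in MB/s (decimal units: 1 Gbit/s = 125 MB/s), before protocol overhead."""
    return gbit_per_s * 1000 / 8


def transfer_minutes(size_gb: float, mb_per_s: float) -> float:
    """Minutes needed to move size_gb (decimal GB) at a constant mb_per_s."""
    return size_gb * 1000 / mb_per_s / 60


ceiling = line_rate_mb_s(10)  # 1250 MB/s
for rate in (500, 600):
    share = rate / ceiling * 100
    minutes = transfer_minutes(100, rate)
    print(f"{rate} MB/s = {share:.0f}% of {ceiling:.0f} MB/s; "
          f"100 GB takes {minutes:.1f} min")
# 500 MB/s = 40% of 1250 MB/s; 100 GB takes 3.3 min
# 600 MB/s = 48% of 1250 MB/s; 100 GB takes 2.8 min
```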

Conclusion

The conversion took some effort, but I am quite satisfied with the result. I can now do without storing photos and videos locally. As before, backups are handled by USBBackup in Openmediavault.

ciao tuxoche